For the last two years, the AI conversation has been dominated by one thing: new model releases. Every few months, there’s another announcement: a bigger model, a faster model, a more capable agent, a new benchmark, a new demo. And to be clear, the progress is real. The models are extraordinary.

But inside enterprise production environments, something different is happening. The real bottleneck isn’t model capability. It’s that the model has no structured understanding of the environment it’s operating in.

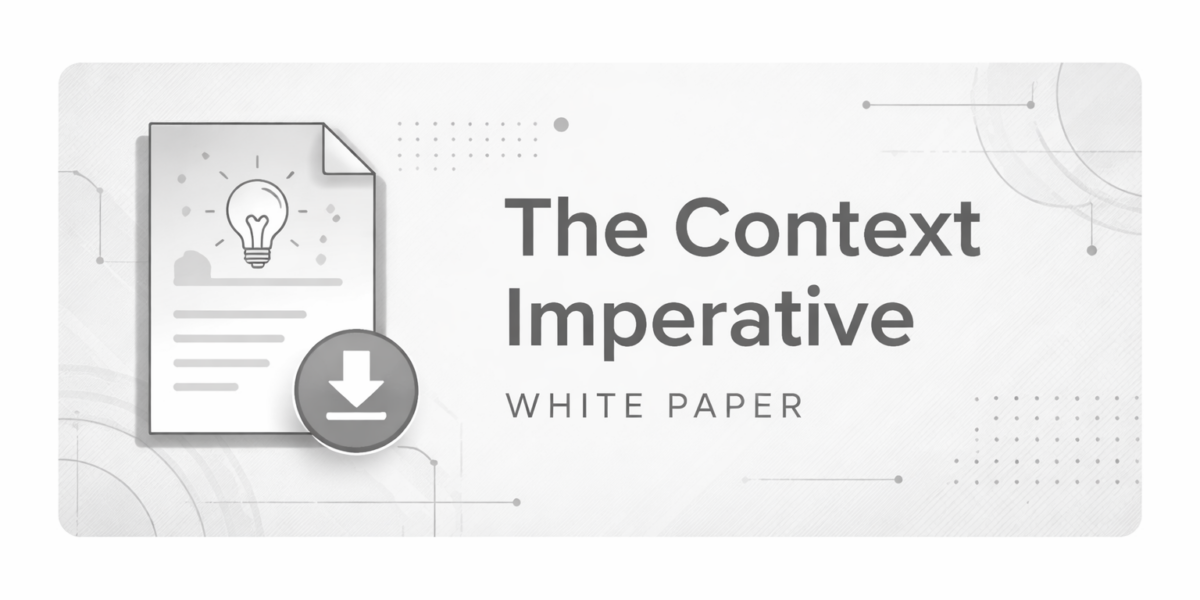

Most enterprises think about AI in fairly simple layers. There’s the model at the foundation. On top of that, there’s an agent or an application, and then users interact with whatever that system produces. That framing makes sense, but it skips over something critical. What’s missing is a structured, living representation of the environment the AI is operating in: your architecture, your dependency graph, your service boundaries, ownership policies, and compliance constraints. Without that layer, the model is operating in fragments. Sure, it can retrieve documents, it can detect basic patterns, and it can generate code that looks correct, but it doesn’t truly understand impact.
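To make that missing layer a little more concrete, here is a minimal sketch of what a structured representation could look like. Everything in it is an assumption made for illustration: the `Service` fields, the `impacted_by` helper, and the example services are hypothetical, not a description of any particular product. The idea is simply that once dependencies and ownership are stored as data, "what does this change affect?" becomes a query instead of a guess.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Service:
    """One node in a hypothetical context graph."""
    name: str
    owner: str                                            # owning team
    depends_on: list[str] = field(default_factory=list)   # direct dependencies
    compliance: set[str] = field(default_factory=set)     # e.g. {"PCI"}


def impacted_by(services: dict[str, Service], changed: str) -> list[str]:
    """Return every service that directly or transitively depends on `changed`."""
    # Invert the dependency edges: service -> services that depend on it.
    dependents: dict[str, list[str]] = {}
    for svc in services.values():
        for dep in svc.depends_on:
            dependents.setdefault(dep, []).append(svc.name)

    # Breadth-first walk over the inverted edges.
    seen = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for name in dependents.get(current, []):
            if name not in seen:
                seen.add(name)
                queue.append(name)
    return sorted(seen - {changed})


# Illustrative data only; these services and teams are made up.
services = {
    s.name: s
    for s in [
        Service("ledger", owner="payments", compliance={"PCI"}),
        Service("billing", owner="payments", depends_on=["ledger"], compliance={"PCI"}),
        Service("checkout", owner="storefront", depends_on=["billing"]),
        Service("search", owner="discovery"),
    ]
}

impacted = impacted_by(services, "ledger")
owners_to_notify = sorted({services[name].owner for name in impacted})
print(impacted)          # ['billing', 'checkout']
print(owners_to_notify)  # ['payments', 'storefront']
```

A model that only sees the `ledger` source files has no way to know that `checkout` is downstream of it. A context layer, however it is actually built, is what would surface that.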

And that’s where the illusion of intelligence shows up. The output sounds right. It looks clean. It passes a surface-level review. But it’s reasoning without a living map of the system.

In enterprise environments, that gap shows up quickly. Without structured context, here’s what we consistently see. Only about a third of AI-generated code is accepted on the first pass. Pull requests go through multiple review cycles before merging. Senior engineers spend double-digit hours every week reviewing and correcting AI output. Architectural violations are caught late, sometimes in production. Compliance checks happen after the fact, and many AI initiatives take a year or more just to break even.

When you introduce real context infrastructure into the stack, the numbers change.

First-pass acceptance rates jump dramatically. Review cycles drop by more than half. Senior engineer rework time shrinks to a fraction of what it was. Architectural violations are prevented at the point of generation, compliance becomes continuous instead of reactive, and ROI becomes measurable within the first quarter.
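As a rough illustration of what "prevented at the point of generation" could mean in practice, the sketch below checks a proposed change against a boundary policy before it ever reaches a reviewer. The `ALLOWED_CALLS` table and the `boundary_violations` function are hypothetical names invented for this example, and the policy is deliberately simplistic. A real system would derive these rules from the architecture itself.

```python
# Hypothetical boundary policy: which services each service may call directly.
ALLOWED_CALLS = {
    "checkout": {"billing"},
    "billing": {"ledger"},
    "ledger": set(),
}


def boundary_violations(service: str, proposed_calls: set[str]) -> list[str]:
    """List the calls in a proposed change that the boundary policy does not allow."""
    allowed = ALLOWED_CALLS.get(service, set())
    return sorted(proposed_calls - allowed)


# A generated change to `checkout` that reaches past `billing` into `ledger`.
violations = boundary_violations("checkout", {"billing", "ledger"})
if violations:
    print(f"rejected before review: checkout may not call {violations}")
```

The check itself is trivial. The hard part, and the point of the infrastructure layer, is having an accurate and current policy to check against.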

The model didn’t change. The infrastructure did. The next phase of enterprise AI isn’t just about model upgrades. It’s about building the missing infrastructure layer that allows these models to operate safely, accurately, and at scale.

Because in production environments, intelligence isn’t measured by how fluent the output sounds. It’s measured by whether the system understands consequences, and that requires context.