The past two years of AI development have been defined by a race toward larger models and larger context windows. Model providers are now advertising systems that can process hundreds of thousands of tokens and, in some cases, more than a million tokens of context. These advances are meaningful. A larger context window allows a model to ingest more information during a single inference and maintain more of that information in working memory while generating a response.

However, increasing the context window does not solve the core problem facing AI in software development.

The real challenge is not simply how much information a model can read. The challenge is how to identify the right information across an engineering environment and deliver it to the AI system at the moment it is needed.

This distinction between capacity and relevance is increasingly important as organizations attempt to move AI coding tools beyond simple code generation and into real production workflows.

The Context Window Misconception

Many discussions about AI development tools assume that a larger context window will eventually eliminate the need for more sophisticated context management. The logic seems straightforward. If a model can process enough tokens, it should be able to read an entire codebase and reason about it.

In practice, this approach breaks down quickly.

Modern software systems are not single repositories or self-contained applications. They are complex environments made up of many interconnected parts. A typical enterprise system may contain dozens or hundreds of repositories. Those repositories may represent microservices, internal libraries, infrastructure definitions, configuration files, documentation, and integration layers.

In addition to code, there are APIs, dependency graphs, deployment pipelines, policy definitions, observability tools, and operational playbooks. These elements evolve constantly as new features are added and systems change.

Even if a model could theoretically read all of this information at once, that does not mean it would know what information is relevant for a specific task.

Context windows increase the amount of information a model can process. They do not solve the problem of discovering the right information across a complex environment.

Enterprise Systems Are Not Giant Documents

One of the most common misconceptions in AI development is the idea that a codebase behaves like a large document.

Large language models were originally designed to process text. Many AI workflows therefore assume that information should be aggregated into prompts in a way that resembles a long document.

Software systems do not behave this way.

Codebases are structured networks of components. Files reference other files. Services interact through APIs. Libraries introduce dependency chains. Configuration files affect runtime behavior. Infrastructure code defines how systems are deployed and scaled.
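
To make the "structured network" idea concrete, here is a minimal sketch in Python. The component names and edges are hypothetical, invented purely for illustration. The point is only that a question such as "what might a change here affect?" becomes a graph traversal rather than a reading exercise.

```python
from collections import deque

# Hypothetical dependency edges: each key depends on the components in its list.
DEPENDS_ON = {
    "checkout-service": ["payments-lib", "orders-api"],
    "orders-api": ["shared-auth"],
    "billing-service": ["payments-lib"],
    "payments-lib": ["shared-auth"],
    "shared-auth": [],
}


def reverse_edges(depends_on):
    """Invert the graph so each component maps to its direct dependents."""
    dependents = {name: [] for name in depends_on}
    for component, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(component)
    return dependents


def impacted_by(changed, depends_on):
    """Return every component that depends on `changed`, directly or transitively."""
    dependents = reverse_edges(depends_on)
    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(seen)


print(impacted_by("payments-lib", DEPENDS_ON))
# ['billing-service', 'checkout-service']
```

Nothing in the text of `payments-lib` itself says which services rely on it. That information lives in the edges between components, which is why it has to be modeled explicitly.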

When developers work on these systems, they rarely approach them as if they were reading a single document from beginning to end. Instead, they rely on structural understanding.

They know which services interact with each other. They know which libraries are shared across teams. They know which parts of the system are fragile or tightly coupled. They understand how a change in one place might affect behavior somewhere else.

This knowledge allows engineers to reason about a system without constantly rereading the entire codebase.

AI systems need a similar form of understanding.

Why Bigger Prompts Do Not Solve the Problem

Consider a simple development task such as reviewing a pull request.

A code review requires more than reading the lines of code that have changed. A reviewer typically asks several questions.

Does this change break an API contract?

Does it introduce a dependency that conflicts with other services?

Does it violate architectural guidelines?

Does it affect performance characteristics in another part of the system?

To answer these questions, a reviewer may need to understand several pieces of context. These could include the surrounding code, the service architecture, dependency relationships, and historical design decisions.

Simply providing a model with a larger context window does not automatically surface this information. The model still needs to know where to look and how the pieces of information relate to each other.
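
As one illustration of "knowing where to look", the first reviewer question can be sketched as a lookup against recorded consumer usage. This is a minimal sketch under stated assumptions: the field names and the consumer registry are hypothetical, and a real system would presumably derive them from API specifications and call-site analysis rather than hand-written dictionaries.

```python
# Hypothetical response fields of an endpoint before and after a pull request.
OLD_FIELDS = {"order_id", "status", "total_cents", "currency"}
NEW_FIELDS = {"order_id", "status", "total", "currency"}

# Hypothetical registry of which response fields each consuming service reads.
CONSUMER_USAGE = {
    "billing-service": {"order_id", "total_cents"},
    "notifications": {"order_id", "status"},
}


def broken_consumers(old_fields, new_fields, consumer_usage):
    """Map each consumer to the fields it reads that the change removes."""
    removed = old_fields - new_fields
    broken = {}
    for consumer, used in consumer_usage.items():
        missing = used & removed
        if missing:
            broken[consumer] = sorted(missing)
    return broken


print(broken_consumers(OLD_FIELDS, NEW_FIELDS, CONSUMER_USAGE))
# {'billing-service': ['total_cents']}
```

The diff on its own shows only a renamed field. It is the relationship between the endpoint and its consumers that reveals the rename as a breaking change.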

This is why many organizations experimenting with AI coding tools report the same pattern: the tools can produce impressive code snippets, but the output often requires significant human review before it can be merged safely into production systems.

The issue is rarely the syntax of the generated code. The issue is whether the code fits correctly within the broader system.

How Senior Engineers Actually Work

One useful analogy is the role of senior engineers within a development organization.

Senior engineers are not valuable simply because they can write code quickly. Their value comes from the knowledge they have accumulated about the systems they work on.

They understand how different services interact.

They recognize patterns that have caused problems in the past.

They know which dependencies are critical to stability.

They can anticipate how a small change might create unexpected consequences elsewhere in the system.

This institutional knowledge allows them to make better decisions with less information.

When a senior engineer reviews a change, they do not need to reread every line of code in the repository. They rely on mental models of how the system is structured.

The goal of enterprise AI systems should be to approximate this type of understanding.

Instead of treating a codebase as a static document, AI needs structured access to the relationships between repositories, services, dependencies, and operational constraints.

Context as Structured System Knowledge

When engineers talk about context in AI development, they are not simply referring to longer prompts.

Context refers to structured knowledge about how a system is built and how its components interact.

This knowledge may include information such as repository relationships, service boundaries, dependency graphs, API contracts, configuration policies, and infrastructure definitions.

When AI systems have access to this information, they can begin to reason about code in a way that reflects how software actually operates.

For example, if an AI agent is asked to modify a service endpoint, relevant context might include the API specification, the services that consume that endpoint, and the policies that govern how the endpoint should behave.

If an agent is reviewing a pull request, relevant context might include dependency relationships, architectural guidelines, and historical patterns that have previously caused defects.

In both cases, the key challenge is not increasing the amount of text the model can process. The challenge is selecting and organizing the information that matters for the task at hand.
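
The selection step described above can be sketched as a filter over typed knowledge items. Everything here is an assumption made for illustration: the `ContextItem` type, the example endpoints, and the mapping from task type to context kinds are hypothetical and do not describe any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    kind: str     # e.g. "api_spec", "consumer", "policy"
    subject: str  # the endpoint the item describes
    summary: str


# Hypothetical knowledge base covering two endpoints.
KNOWLEDGE = [
    ContextItem("api_spec", "GET /orders/{id}", "Returns order_id, status, total_cents."),
    ContextItem("consumer", "GET /orders/{id}", "billing-service reads total_cents."),
    ContextItem("policy", "GET /orders/{id}", "Responses must not include customer email."),
    ContextItem("api_spec", "POST /refunds", "Creates a refund for a settled order."),
    ContextItem("policy", "POST /refunds", "Requires an idempotency key."),
]

# Assumed mapping from task type to the kinds of context that task needs.
KINDS_FOR_TASK = {
    "modify_endpoint": ("api_spec", "consumer", "policy"),
    "write_docs": ("api_spec",),
}


def select_context(task, subject, knowledge):
    """Keep only the items about `subject` whose kind is relevant to `task`."""
    wanted = KINDS_FOR_TASK[task]
    return [item for item in knowledge if item.subject == subject and item.kind in wanted]


for item in select_context("modify_endpoint", "GET /orders/{id}", KNOWLEDGE):
    print(item.kind, "-", item.summary)
```

For the endpoint modification task, the agent receives the specification, the consumer, and the policy for that one endpoint, and nothing about refunds.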

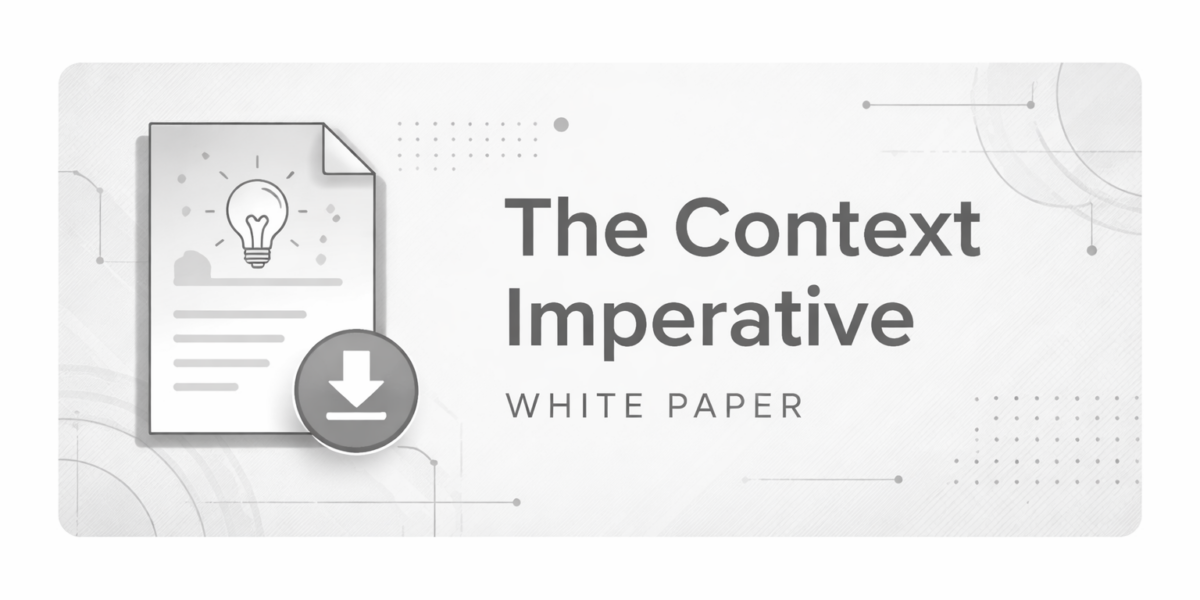

The Role of Context Engineering

This shift in thinking has led to the emergence of a discipline often referred to as context engineering.

Context engineering focuses on how relevant system knowledge is retrieved, structured, and delivered to AI systems. It involves building mechanisms that allow AI agents to access the information they need without overwhelming them with irrelevant data.

Several techniques are often involved.

Systems may build representations of repository structures and dependency graphs. They may track relationships between services and APIs. They may capture architectural rules that define how systems should evolve.

When a task is performed, the AI system receives a targeted set of contextual information derived from these structures.
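
One way such a targeted set might be assembled is to rank components by their distance from the one being changed and then fill a fixed token budget with the closest ones. The sketch below assumes a hypothetical relationship graph and made-up token costs. It is one possible heuristic, not a description of how any specific product works.

```python
from collections import deque

# Hypothetical "is related to" edges between components.
RELATED = {
    "orders-api": ["payments-lib", "shared-auth"],
    "payments-lib": ["orders-api", "billing-service"],
    "shared-auth": ["orders-api"],
    "billing-service": ["payments-lib", "reporting"],
    "reporting": ["billing-service"],
}

# Hypothetical size, in tokens, of the context snippet for each component.
TOKEN_COST = {
    "orders-api": 1200,
    "payments-lib": 900,
    "shared-auth": 700,
    "billing-service": 1500,
    "reporting": 2000,
}


def distances_from(start, related):
    """Breadth-first search: number of hops from `start` to each reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in related.get(node, []):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def assemble_context(target, related, token_cost, budget):
    """Take the closest components first, skipping any that exceed the remaining budget."""
    dist = distances_from(target, related)
    ranked = sorted(dist, key=lambda name: (dist[name], name))
    chosen, remaining = [], budget
    for name in ranked:
        cost = token_cost[name]
        if cost <= remaining:
            chosen.append(name)
            remaining -= cost
    return chosen


print(assemble_context("orders-api", RELATED, TOKEN_COST, budget=3000))
# ['orders-api', 'payments-lib', 'shared-auth']
```

A larger budget admits more distant components, but the ranking still decides what goes in first. Capacity and relevance are separate controls.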

This approach mirrors how human engineers work. Developers do not absorb all available information before solving a problem. They retrieve the specific knowledge that is relevant to the task they are performing.

Context Enables Better Reasoning

Providing structured context allows AI systems to reason about software development tasks more effectively.

Instead of generating code based only on patterns in the training data, the model can evaluate how a change interacts with the real system in which it will run.

This improves several aspects of the development process.

AI systems can perform more meaningful code reviews because they understand how changes relate to other services. They can identify dependency conflicts that would otherwise appear only during integration testing. They can detect architectural violations earlier in the development lifecycle.
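
As a small illustration of detecting an architectural violation before integration, the sketch below checks import edges against a layering rule. The module names, layers, and rule table are hypothetical. A real check would extract the import edges from source code rather than list them by hand.

```python
# Hypothetical assignment of modules to architectural layers.
LAYER_OF = {
    "web.handlers": "web",
    "services.orders": "service",
    "services.billing": "service",
    "db.orders_repo": "data",
}

# Hypothetical rule: each layer may import only from the layers listed for it.
ALLOWED_IMPORTS = {
    "web": {"web", "service"},
    "service": {"service", "data"},
    "data": {"data"},
}

# Hypothetical import edges as (importer, imported) pairs.
IMPORTS = [
    ("web.handlers", "services.orders"),
    ("services.orders", "db.orders_repo"),
    ("web.handlers", "db.orders_repo"),  # bypasses the service layer
    ("services.billing", "services.orders"),
]


def find_violations(imports, layer_of, allowed):
    """Return the import edges whose target layer is not permitted for the source layer."""
    violations = []
    for importer, imported in imports:
        source_layer = layer_of[importer]
        target_layer = layer_of[imported]
        if target_layer not in allowed[source_layer]:
            violations.append((importer, imported))
    return violations


print(find_violations(IMPORTS, LAYER_OF, ALLOWED_IMPORTS))
# [('web.handlers', 'db.orders_repo')]
```

The offending import is valid code that would compile and run. It is only wrong relative to a rule that lives outside the file being changed.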

These capabilities move AI beyond simple code generation toward system-level reasoning.

Context and Trust

Trust remains one of the largest barriers to broader adoption of AI in software development.

Many engineering teams experiment with AI tools but hesitate to rely on them for critical workflows. Developers often feel confident using AI for small tasks but remain cautious when applying AI-generated changes to production systems.

This hesitation is understandable.

Without system-level context, AI outputs may be syntactically correct but semantically incorrect within the broader architecture. A piece of generated code might compile successfully while still violating design constraints or introducing subtle operational risks.

As a result, developers often treat AI-generated code as a draft that requires careful human validation.

Providing AI systems with deeper context can reduce this gap.

When AI agents understand the architecture of the system they are operating in, they are better able to produce changes that align with existing patterns and constraints. They can anticipate the impact of modifications across services and dependencies.

Over time, this system awareness can increase developer confidence in AI-assisted workflows.

Trust is not built by making models larger. It is built by enabling AI to reason about the real environments in which software runs.

The Next Phase of AI Development

The rapid improvement of language models has created the foundation for a new generation of development tools. However, model capability alone is not sufficient to transform software engineering workflows.

The next phase of AI development will focus on integrating models with the systems they are meant to assist.

This requires mechanisms that connect AI to the knowledge embedded across an organization. Code repositories, service architectures, dependency graphs, infrastructure definitions, and operational data all contribute to a complete picture of how software systems behave.

AI that can access and reason over this structured knowledge will be far more useful than AI that simply processes larger prompts.

The distinction between context window size and contextual understanding will become increasingly clear as organizations attempt to scale AI usage across complex environments.

Larger context windows increase the amount of information a model can process. Context engineering ensures that the right information is delivered to the model at the right time.

For enterprise software development, that difference matters.

Bigger context windows may expand the capacity of AI systems. But real progress in AI coding will come from giving those systems a deeper understanding of the environments in which they operate.