Understanding the limits of retrieval in real software systems

Over the past two years, retrieval-augmented generation (RAG) has become one of the most widely adopted patterns for building AI applications. It has been applied to chatbots, enterprise search, customer support systems, and increasingly to developer tooling. Many teams now assume that if they connect a model to their codebase using RAG, the model will gain a meaningful understanding of how their systems work.

This assumption is understandable. Retrieval-augmented generation represents a major step forward from purely pretrained models. It allows AI systems to incorporate up-to-date information, private data, and domain-specific knowledge. It can dramatically improve factual accuracy and relevance when applied to the right kinds of problems.

But there is a growing realization among engineering teams that retrieval alone does not solve the deeper challenge of AI in complex software environments. In large codebases, the most important information is often not contained in a single file or document. It exists in the relationships between components. It lives in the architecture itself.

This distinction is becoming increasingly important as AI tools evolve from code completion and explanation into agents capable of making real changes to production systems. To understand why, it is useful to look closely at what RAG actually does, and what it fundamentally cannot do.

What retrieval-augmented generation is designed to solve

At its core, retrieval-augmented generation is a pattern for giving language models access to external knowledge. The process typically begins by taking a collection of documents, code files, or knowledge base entries and converting them into vector embeddings. Embeddings place semantically similar content close together in vector space, which allows the system to search for content that is meaningfully related to a given query.

When a developer asks a question or an AI agent needs information, the system performs a similarity search against the embedding index. The most similar chunks of text are retrieved and inserted into the model’s prompt. The model then generates a response based on both the query and the retrieved content.
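
The pipeline described above can be sketched in a few lines. This is a minimal illustration, not a production design: it assumes a bag-of-words term-count vector as a stand-in for a learned embedding model, and the names `embed`, `cosine`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a learned embedding model: a bag-of-words term-count vector.
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    # Score every indexed chunk against the query and keep the k most similar.
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(query: str, retrieved: list[str]) -> str:
    # Insert the retrieved chunks into the prompt ahead of the question.
    context = "\n---\n".join(retrieved)
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "def charge_card(amount): calls the payments API to charge a card",
    "README: the billing service sends invoices every month",
    "def render_header(): draws the page header",
]
# Embed once at indexing time; only the query is embedded per request.
index = [(chunk, embed(chunk)) for chunk in chunks]
top = retrieve("how do we charge a card", index, k=1)
print(build_prompt("how do we charge a card", top))
```

Note that nothing in this loop knows what the code does or what depends on it; ranking is driven entirely by shared vocabulary.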

This approach is highly effective for problems that are fundamentally about information retrieval. It works well when the answer exists in a document and the challenge is simply to find the right document. It is particularly useful for tasks such as explaining an API, summarizing a specification, or locating a function definition.

In these scenarios, retrieval provides the model with the context it needs to produce a correct and relevant answer. The system benefits from up-to-date knowledge without requiring retraining. For many applications, this is a major breakthrough.

However, software systems are not just collections of documents.

The difference between documents and systems

Modern software systems are networks of interacting components. A single service may depend on multiple other services, external APIs, data models, and shared libraries. Changes to one part of the system can have cascading effects elsewhere. These effects are often subtle and difficult to predict even for experienced engineers.

The knowledge required to understand such systems is distributed. It is expressed partly in source code, partly in configuration files, partly in build scripts, and partly in the collective mental models of the teams that maintain them. Some of it exists in architecture diagrams. Some of it exists in CI pipelines. Some of it exists in conventions that have evolved over years.

Retrieval-augmented generation treats all of this as text. It retrieves fragments that look relevant to a query. But similarity search does not reconstruct the structure of the system. It cannot infer the full set of relationships that determine how components interact.

This is where many teams encounter the limits of retrieval-based approaches.

Where retrieval breaks down in real codebases

Consider a scenario where an AI agent is asked to modify a service. A typical RAG pipeline might retrieve the service’s source files, related tests, and perhaps a README that describes its purpose. The model may then generate a change that appears reasonable within that local context.

What it may not retrieve are the architectural constraints that govern how the service interacts with the rest of the system. It may not understand that a particular API is a stable contract relied upon by other teams. It may not know that a change introduces a circular dependency that will break the build. It may not recognize that a data model is shared across multiple services.

These are not retrieval problems. They are graph problems.

They require understanding the topology of the system. They require reasoning about dependencies and constraints that are not always expressed explicitly in text.
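
A circular dependency is a good example: it is a property of the whole graph, not of any single file, so no retrieved fragment reveals it on its own. The sketch below checks a proposed edge against a module dependency map. The module names and the dict-of-sets representation are hypothetical; a real build system would derive this graph from its own manifests.

```python
from collections import deque


def reachable(deps: dict[str, set[str]], start: str, target: str) -> bool:
    # Breadth-first search along "depends on" edges from start.
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        for dep in deps.get(node, set()):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return False


def introduces_cycle(deps: dict[str, set[str]], src: str, dst: str) -> bool:
    # Adding the edge src -> dst closes a cycle exactly when src is already
    # reachable from dst (a self-edge, src == dst, also counts as a cycle).
    return reachable(deps, dst, src)


# Hypothetical module graph: orders -> billing -> ledger.
deps = {"orders": {"billing"}, "billing": {"ledger"}, "ledger": set()}
print(introduces_cycle(deps, "ledger", "orders"))  # True: ledger -> orders -> billing -> ledger
print(introduces_cycle(deps, "orders", "ledger"))  # False: only a shortcut edge
```

The check touches files that never appear in the change itself, which is exactly the information a locally scoped retrieval step tends to miss.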

When AI tools rely solely on retrieval, they often produce output that is locally plausible but globally incorrect. This is likely one of the main reasons AI-generated code often requires extensive human review before it can be safely merged.

Context as structured understanding

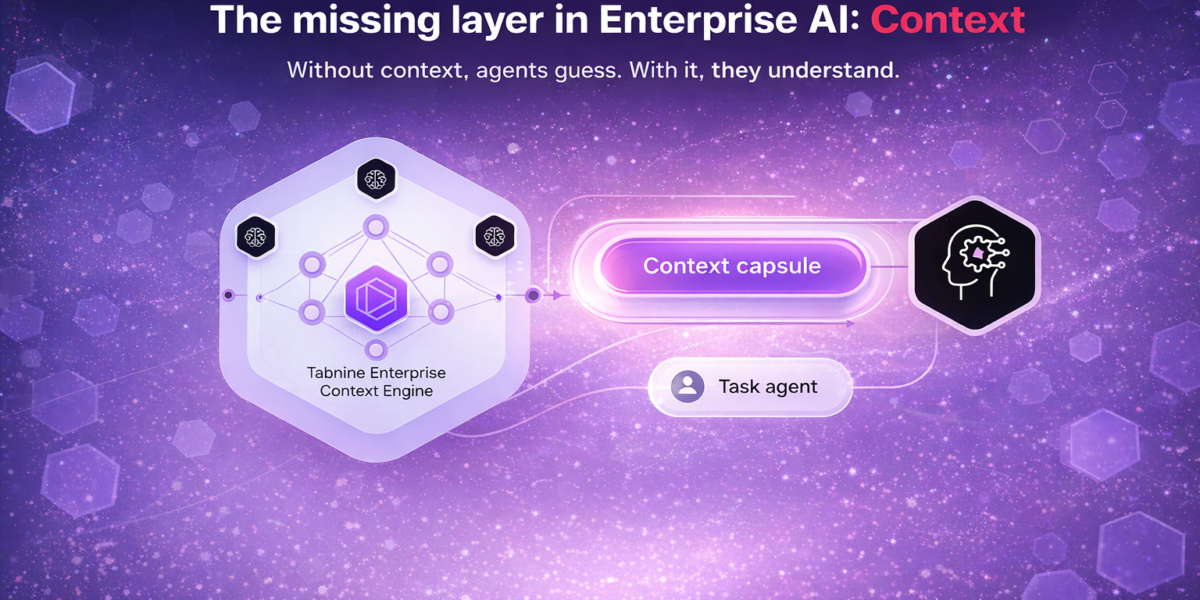

To move beyond these limitations, AI systems need more than access to documents. They need structured representations of the environments in which they operate.

Context in this sense is not simply a larger prompt or a longer context window. It is a model of the system itself. It captures relationships between services, APIs, data models, and policies. It encodes information about ownership, architectural boundaries, and operational constraints.
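
One way to picture such a model is as a typed graph: nodes carry a kind, an owner, and an architectural boundary, and edges carry the kind of relationship. The schema below is a hypothetical sketch; the field names and relation labels are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    name: str
    kind: str            # e.g. "service", "api", "data_model", "policy"
    owner: str           # team accountable for this component
    boundary: str = ""   # architectural boundary, e.g. a domain or bounded context


@dataclass(frozen=True)
class Edge:
    src: str
    relation: str        # e.g. "depends_on", "exposes", "uses_model"
    dst: str


@dataclass
class SystemModel:
    nodes: dict[str, Node] = field(default_factory=dict)
    edges: set[Edge] = field(default_factory=set)

    def add_node(self, node: Node) -> None:
        self.nodes[node.name] = node

    def add_edge(self, src: str, relation: str, dst: str) -> None:
        # Reject edges to unknown nodes so every relationship stays resolvable.
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError(f"unknown node in edge {src!r} -> {dst!r}")
        self.edges.add(Edge(src, relation, dst))

    def related(self, name: str, relation: str) -> set[str]:
        # Outgoing neighbours of `name` along one kind of relationship.
        return {e.dst for e in self.edges if e.src == name and e.relation == relation}


model = SystemModel()
model.add_node(Node("checkout", "service", owner="team-payments", boundary="commerce"))
model.add_node(Node("invoicing", "service", owner="team-finance", boundary="finance"))
model.add_node(Node("Order", "data_model", owner="team-payments", boundary="commerce"))
model.add_edge("checkout", "uses_model", "Order")
model.add_edge("invoicing", "uses_model", "Order")
print(model.related("checkout", "uses_model"))  # {'Order'}
```

Because ownership and boundaries live on the nodes, a question such as "which teams share the `Order` model?" becomes a lookup rather than a text search.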

With structured context, AI systems can ask different kinds of questions. Instead of retrieving text that looks similar to a query, they can reason about the implications of a change.

For example, an AI agent could determine which systems depend on a given service. It could evaluate whether a modification is compatible with an existing API contract. It could detect violations of architectural policy before a change is even proposed.
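
The first two of these questions can be sketched directly. `dependents` walks the dependency graph backwards to find every service that transitively relies on a target, and `breaking_changes` compares two versions of a contract expressed as field-to-type maps. Both the representation and the compatibility rule are simplifying assumptions made for illustration; real schema formats define their own, richer compatibility rules.

```python
from collections import deque


def dependents(deps: dict[str, set[str]], target: str) -> set[str]:
    # Invert "X depends on Y" edges so we can walk from a service to its consumers.
    consumers: dict[str, set[str]] = {}
    for node, targets in deps.items():
        for t in targets:
            consumers.setdefault(t, set()).add(node)
    # Breadth-first walk collects direct and transitive dependents.
    seen: set[str] = set()
    queue = deque([target])
    while queue:
        for parent in consumers.get(queue.popleft(), set()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen - {target}


def breaking_changes(old: dict[str, str], new: dict[str, str]) -> list[str]:
    # Simplified rule: removing or retyping a field breaks consumers; adding one does not.
    problems = []
    for name, typ in old.items():
        if name not in new:
            problems.append(f"removed field {name!r}")
        elif new[name] != typ:
            problems.append(f"changed type of {name!r}: {typ} -> {new[name]}")
    return problems


# Hypothetical service graph.
deps = {
    "web": {"checkout"}, "mobile": {"checkout"}, "checkout": {"payments"},
    "reports": {"ledger"}, "payments": set(), "ledger": set(),
}
print(sorted(dependents(deps, "payments")))  # ['checkout', 'mobile', 'web']
print(breaking_changes({"id": "string", "amount": "int"},
                       {"id": "string", "amount": "float", "currency": "string"}))
# ["changed type of 'amount': int -> float"]
```

Combining the two gives a rough impact report: if `breaking_changes` is non-empty for the `payments` contract, every name returned by `dependents` is a candidate for review.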

These are reasoning tasks that depend on structured knowledge. They require the AI to operate on a graph of relationships rather than a set of documents.
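
An architectural policy check illustrates the point: it is a rule evaluated over every edge of the graph, including edges that a change would add. The sketch below assumes a hypothetical three-layer policy in which a component may depend on its own layer or a lower one, never on a higher one; the layer names and components are invented for illustration.

```python
# Hypothetical policy: layers listed from top to bottom; a component may depend
# on its own layer or any layer below it, never on a layer above.
LAYER_ORDER = ["ui", "domain", "data"]


def policy_violations(layers: dict[str, str],
                      deps: dict[str, set[str]]) -> list[tuple[str, str]]:
    rank = {layer: i for i, layer in enumerate(LAYER_ORDER)}
    bad = []
    for src in sorted(deps):
        for dst in sorted(deps[src]):
            # A lower rank means a higher layer, so pointing upward is a violation.
            if rank[layers[dst]] < rank[layers[src]]:
                bad.append((src, dst))
    return bad


layers = {"web": "ui", "cart": "domain", "cart_repo": "data"}
current = {"web": {"cart"}, "cart": {"cart_repo"}, "cart_repo": set()}
# Evaluate a proposed change by checking the graph as it would look afterwards.
proposed = {**current, "cart": current["cart"] | {"web"}}
print(policy_violations(layers, current))   # []
print(policy_violations(layers, proposed))  # [('cart', 'web')]
```

Running the rule against the proposed graph, rather than the diff text, is what lets the violation surface before the change is made.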

The limits of similarity search

Similarity search is a powerful tool, but it has inherent limitations. It operates on the assumption that semantic proximity correlates with relevance. This assumption holds in many domains, but it breaks down in systems where the most important information is relational rather than textual.

In large codebases, the fact that two files share similar language does not necessarily mean they are functionally related. Conversely, components that are tightly coupled may not share obvious textual similarities. Their relationship may be encoded in configuration, runtime behavior, or historical design decisions.
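
A toy example makes the mismatch concrete. Two hypothetical files are coupled only through a message queue declared in deployment config, while a third, unrelated file happens to share their vocabulary. The file names, texts, and config format are invented for illustration, and the bag-of-words vectors stand in for a learned embedding model, which would score these pairs differently; the coupling itself is still recorded only in the config, which neither source text mentions.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy bag-of-words vector standing in for an embedding model.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical source summaries and deployment config.
sources = {
    "billing/producer.py": "emit invoice record to the outbound channel",
    "shipping/consumer.py": "receive parcel request and schedule pickup",
    "marketing/invoice_email.py": "render invoice record as an email to the customer",
}
queues = {
    "billing/producer.py": {"publishes": "q.fulfilment"},
    "shipping/consumer.py": {"subscribes": "q.fulfilment"},
}


def queue_couplings(config: dict[str, dict[str, str]]) -> set[tuple[str, str]]:
    # Pair every publisher with every subscriber of the same queue.
    pubs: dict[str, list[str]] = {}
    subs: dict[str, list[str]] = {}
    for path, entry in config.items():
        if "publishes" in entry:
            pubs.setdefault(entry["publishes"], []).append(path)
        if "subscribes" in entry:
            subs.setdefault(entry["subscribes"], []).append(path)
    return {(p, s) for q, ps in pubs.items() for p in ps for s in subs.get(q, [])}


vec = {path: embed(text) for path, text in sources.items()}
print(cosine(vec["billing/producer.py"], vec["shipping/consumer.py"]))        # 0.0
print(cosine(vec["billing/producer.py"], vec["marketing/invoice_email.py"]))  # ~0.50
print(queue_couplings(queues))  # {('billing/producer.py', 'shipping/consumer.py')}
```

Similarity ranks the lookalike file first; only the relationship extracted from config reveals the pair that actually breaks together.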

As a result, retrieval pipelines can miss critical dependencies or surface information that is technically related but operationally irrelevant. This can lead to a false sense of understanding. The AI appears informed because it references relevant documents, but it lacks a coherent model of how the system actually works.

This gap becomes especially problematic as organizations begin to rely on AI agents for more complex tasks.

From autocomplete to system-level reasoning

Early AI coding tools were primarily focused on autocomplete. They helped developers write code faster by predicting likely continuations. The risk associated with incorrect suggestions was relatively low because developers remained firmly in control.

Today, AI systems are being asked to do more. They are being used to generate entire features, refactor large portions of code, and automate parts of the development workflow. In some cases, they are being positioned as agents that can execute multi-step tasks with minimal supervision.

As the scope of AI involvement increases, the importance of system-level reasoning grows. It is no longer sufficient for the AI to produce syntactically correct or stylistically consistent code. It must also understand the consequences of its actions.

This is where structured context becomes essential.

Context infrastructure as the next layer

The evolution of enterprise technology often involves the emergence of new infrastructure layers. Databases enabled structured management of data. Cloud platforms enabled scalable deployment of applications. Observability tools enabled real time insight into system behavior.

In the era of AI, organizations are beginning to recognize the need for infrastructure that enables machines to understand the structure of the enterprise itself.

Context infrastructure provides a shared, continuously updated model of the organization’s systems. It integrates information from code repositories, build pipelines, architecture definitions, and operational policies. It transforms this information into a form that AI systems can query and reason over.
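
A minimal sketch of the idea, under heavy assumptions: each source (here hypothetically named `repo_imports` and `ci_pipeline`) reports the edges it currently sees, the graph records which sources assert each edge, and a refresh from one source replaces only that source's edges. Persistence, schemas, and incremental ingestion are omitted.

```python
from collections import defaultdict

EdgeKey = tuple[str, str, str]  # (source node, relation, target node)


class ContextGraph:
    """Hypothetical sketch: one relationship graph fed by several independent sources."""

    def __init__(self) -> None:
        # Each edge remembers which sources currently assert it (its provenance).
        self._edges: defaultdict[EdgeKey, set[str]] = defaultdict(set)

    def refresh(self, source: str, edges: list[EdgeKey]) -> None:
        # Replace everything this source asserted before with its current view,
        # so the model follows changes in repositories, pipelines, and policies.
        for key in list(self._edges):
            self._edges[key].discard(source)
            if not self._edges[key]:
                del self._edges[key]
        for edge in edges:
            self._edges[edge].add(source)

    def incoming(self, relation: str, dst: str) -> dict[str, set[str]]:
        # Which nodes have `relation` to `dst`, and which sources say so.
        return {s: set(srcs) for (s, r, d), srcs in self._edges.items()
                if r == relation and d == dst}


graph = ContextGraph()
graph.refresh("repo_imports", [("checkout", "depends_on", "payments")])
graph.refresh("ci_pipeline", [("checkout", "depends_on", "payments"),
                              ("reports", "depends_on", "payments")])
print(graph.incoming("depends_on", "payments"))
# The pipeline stops reporting `reports`; only that source's claim is withdrawn.
graph.refresh("ci_pipeline", [("checkout", "depends_on", "payments")])
print(graph.incoming("depends_on", "payments"))
```

Keeping provenance per edge is one way to let an agent explain why it believes two components are related, and to drop that belief when its evidence disappears.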

This approach shifts the focus from retrieving text to modeling relationships. It allows AI to operate with situational awareness rather than relying on fragments of information.

The path forward

Retrieval-augmented generation will remain an important technique. It solves real problems and will continue to play a central role in many AI applications. But as AI becomes more deeply embedded in software development, the limitations of retrieval-based approaches will become more apparent.

The next phase of progress will depend on integrating AI with structured representations of complex systems. It will require new ways of modeling architecture, dependencies, and organizational knowledge.

Ultimately, reliable AI development is not just about giving models more data. It is about giving them the right kind of understanding. In large software environments, that understanding must be rooted in the relationships that define how systems function.

Similarity search retrieves documents. Software systems are graphs.

Recognizing this distinction is the first step toward building AI tools that can operate safely and effectively at enterprise scale.